
Blog posts for the month of January, 2009.


| Aaron, 1st to Get Stitches | 12/29/2008 10:43:19 AM |

Aaron James becomes the first to make the Emergency Room visit to get stitches.

Pushing a bike, or running and trying to jump over a bike, is how the story goes.

Oddly, he seems to have actually enjoyed the experience!

|

| Add Remove Programs | 1/6/2009 7:46:43 AM |

Where did Add/Remove Programs go in Windows Server 2008?

It was renamed to "Programs and Features" and is still under Control Panel.

It can also be opened from Start --> Run --> appwiz.cpl

|

| Alter varchar to datetime | 1/14/2009 9:13:13 AM |

Altering a table column from varchar to datetime is pretty straightforward in SQL Server Management Studio (SSMS), until you look at the T-SQL it generates. For many operations SSMS will generate a T-SQL script that will:

- create a temporary table

- drop all the foreign keys

- copy the data to the temporary table

- create the new table with the correct data type

- copy the data to the new table

- drop the temporary table

- add the foreign keys back

That is a lot of operations, and on a really large table with millions of rows it may take a very long time to complete.

SQLCricket comments that it is possible to change the options in SSMS to warn on table operations; I think this is only in SQL Server 2008.

SQLPuma comments that it is possible to change a varchar() to a datetime via tsql with an alter table alter column command.
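As a rough illustration of the alter table alter column route SQLPuma mentions, here is a minimal sketch. The table dbo.alter_demo and its columns are made up for the example, and it assumes every value converts cleanly under the session's date settings; the ISDATE check is there to find rows that would make the ALTER fail.

```sql
-- Hypothetical demo table; the names are illustrative only
CREATE TABLE dbo.alter_demo
(demo_id int NOT NULL IDENTITY(1,1) PRIMARY KEY,
 some_dt varchar(10) NULL);
GO

INSERT INTO dbo.alter_demo (some_dt) VALUES ('2009-01-01');
INSERT INTO dbo.alter_demo (some_dt) VALUES ('2009-01-02');
GO

-- List any values that will not convert; this should return no rows
SELECT demo_id, some_dt
FROM dbo.alter_demo
WHERE some_dt IS NOT NULL
  AND ISDATE(some_dt) = 0;
GO

-- Change the data type in place
ALTER TABLE dbo.alter_demo ALTER COLUMN some_dt datetime NULL;
GO

SELECT * FROM dbo.alter_demo;
GO

-- DROP TABLE dbo.alter_demo;
```

On a very large table this still touches every row in one statement, so it is worth trying on a copy first.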

In this particular case we were modifying a varchar(10) to a datetime, and all the data was in a valid format. The easiest method is to alter the column. Another method is to:

- rename the existing column (tmp_varchar etc)

- add a new column with the correct name

- update the new column (in batches if necessary)

- drop the original column (now with a tmp_name)

This is very quick, a much safer operation, and much "nicer" to the database log file. An example of the T-SQL is below:

CREATE TABLE dbo.testing

(test_id bigint NOT NULL IDENTITY (1, 1) primary key,

some_dt varchar(10)

)

GO

insert into testing (some_dt) values ('2009-01-01')

insert into testing (some_dt) values ('2009-01-02')

insert into testing (some_dt) values ('2009-01-03')

insert into testing (some_dt) values ('2009-01-04')

go

EXECUTE sp_rename 'dbo.testing.some_dt',

'Tmp_some_dt', 'COLUMN'

GO

Alter Table testing

Add some_dt DateTime Default('1900-01-01') NOT NULL

GO

Update testing

Set some_dt = Convert(DateTime,Tmp_some_dt)

GO

Alter Table testing

DROP Column Tmp_some_dt

GO

select * from testing

go

--drop table testing
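The "in batches if necessary" step is not shown in the script above. One possible way to do it is sketched below, not as the method used here but as an illustration. It assumes SQL Server 2005 or later (for UPDATE TOP with a variable), uses a hypothetical table dbo.batch_demo, and makes the new column nullable so that unprocessed rows can be found with IS NULL, which differs from the NOT NULL default used above.

```sql
-- Hypothetical demo table standing in for a large table
CREATE TABLE dbo.batch_demo
(demo_id int NOT NULL IDENTITY(1,1) PRIMARY KEY,
 Tmp_some_dt varchar(10) NULL,   -- the renamed original column
 some_dt datetime NULL);         -- the new column, nullable for this sketch
GO

INSERT INTO dbo.batch_demo (Tmp_some_dt) VALUES ('2009-01-01');
INSERT INTO dbo.batch_demo (Tmp_some_dt) VALUES ('2009-01-02');
INSERT INTO dbo.batch_demo (Tmp_some_dt) VALUES ('2009-01-03');
INSERT INTO dbo.batch_demo (Tmp_some_dt) VALUES ('2009-01-04');
INSERT INTO dbo.batch_demo (Tmp_some_dt) VALUES ('2009-01-05');
GO

DECLARE @batch int, @rows int;
SET @batch = 2;   -- tiny for the demo; a real run would use thousands
SET @rows = 1;

-- Keep updating small batches until nothing is left to convert
WHILE @rows > 0
BEGIN
    UPDATE TOP (@batch) dbo.batch_demo
    SET some_dt = CONVERT(datetime, Tmp_some_dt)
    WHERE some_dt IS NULL
      AND Tmp_some_dt IS NOT NULL;

    SET @rows = @@ROWCOUNT;
END
GO

SELECT * FROM dbo.batch_demo;
GO

-- DROP TABLE dbo.batch_demo;
```

Each pass is its own small transaction, which is what keeps the log file from growing the way a single large update would.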

|

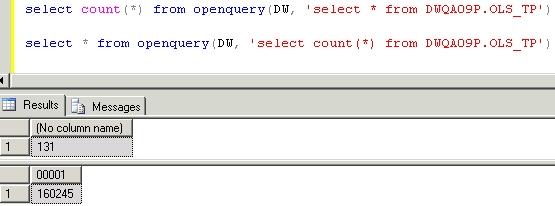

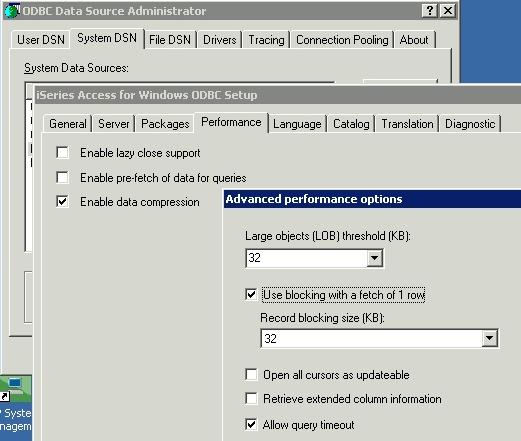

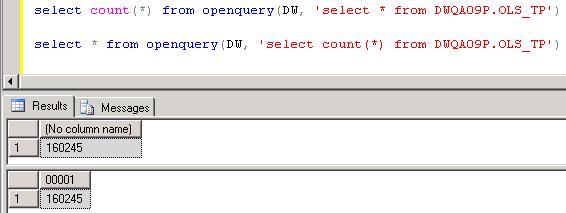

| AS400 Linked Server not returning all rows | 1/6/2009 10:00:00 AM |

I recently ran into an issue with a linked server between SQL Server and an AS400, using the iSeries Access driver via ODBC.

The linked server was not returning all the rows, and it was not throwing any errors either.

There is an advanced settings option in the iSeries AS400 setup that needs to be "unchecked". By default the option is checked, which can lead to missing records.

Once it is unchecked, the query returns the correct count of rows.

|

| DCOM Error 7404 | 1/19/2009 8:50:57 AM |

The server could not load DCOM. [SQLSTATE 42000] (Error 7404). The step failed.

We began receiving this error from failed SQL Server jobs on a SQL Server 2000 box and a Windows 2000 box, after a reboot.

We didn't spend too much time researching the issue, as we were still in a maintenance window, so we performed another reboot and things worked fine.

I've seen many DCOM errors over the years, but not this particular one from SQL Server. Most likely the COM+ or MS DTC sub-systems (services) did not start, or failed to start, on the reboot. There were no system or event log entries that were immediately helpful, though there were other odd entries that seemed related to DCOM not working:

The server {73E709EA-5D93-4B2E-BBB0-99B7938DA9E4} did not register with DCOM within the required timeout.

The NetBackup SAN Client Fibre Transport Service service failed to start due to the following error:

The service did not respond to the start or control request in a timely fashion.

Timeout (30000 milliseconds) waiting for the NetBackup SAN Client Fibre Transport Service service to connect.

BMSS failed to bind to a network interface card (NIC) because none was specified in the registry. Check the registry to make sure the network bind policy is correct. |

| isdate(), derived tables and CTE | 1/6/2009 3:24:16 PM |

UPDATE - 01/29/2009 - I just received a solution to this issue that does not require a temp table (which was my wimpy solution). Thanks to Shawn, who left this great solution in the comments:

Select *

From #checkDates

where convert(datetime,case isdate(rptDate) when 1 then rptDate else null end)

Between

'02/27/2009' and '02/28/2009'
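For anyone who wants to try the CASE approach on its own, here is a self-contained sketch. The temp table name #checkDatesDemo is made up so it does not clash with the script further down, and the date strings assume a session that reads dates as month/day/year (us_english). The second query uses TRY_CONVERT, which only exists in SQL Server 2012 and later, so treat it as an optional alternative.

```sql
-- Hypothetical temp table, modeled on the one used later in this post
CREATE TABLE #checkDatesDemo
(chkDtId int IDENTITY(1,1) PRIMARY KEY,
 rptDate varchar(10));

INSERT INTO #checkDatesDemo (rptDate) VALUES ('02/27/2009');
INSERT INTO #checkDatesDemo (rptDate) VALUES ('02/28/2009');
INSERT INTO #checkDatesDemo (rptDate) VALUES ('02/29/2009'); -- not a real date

-- CASE hands NULL to CONVERT for the bad value, so the conversion cannot fail
SELECT chkDtId, rptDate
FROM #checkDatesDemo
WHERE CONVERT(datetime, CASE WHEN ISDATE(rptDate) = 1 THEN rptDate END)
      BETWEEN '02/27/2009' AND '02/28/2009';

-- SQL Server 2012 and later only: TRY_CONVERT returns NULL instead of raising an error
SELECT chkDtId, rptDate
FROM #checkDatesDemo
WHERE TRY_CONVERT(datetime, rptDate)
      BETWEEN '02/27/2009' AND '02/28/2009';

DROP TABLE #checkDatesDemo;
```

Both forms keep the validity test and the conversion inside a single expression, so it does not matter in which order the where clause predicates are evaluated.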

The isdate() function does not work properly (or at least not as I expected it would) when it is used inside a derived table or a Common Table Expression. This is really frustrating, as it changes the way I think of derived tables and CTEs. I always assumed that the inner query or CTE was resolved first, but if you follow the T-SQL below, you can see that is not the case: the optimizer is free to fold the inner query into the outer one and run the convert() before the isdate() filter.

This may affect other functions, such as isnumeric() or even custom functions, so test them carefully.

I was working on a report issue where an invalid date, 02/29/2009, was being derived from a varchar. It is very simple to filter out, but due to the way the where clause is resolved / short-circuited in T-SQL, it was going to be necessary to use a derived table or a CTE, and neither would work. I ended up resorting to an additional temporary table (wimpy, wimpy). I don't like this solution and would like to find one that does not require the temporary table.

Here is the problem:

create table #checkDates

(chkDtId int identity(1,1) primary key,

rptDate varchar(10))

Insert into #checkDates (rptDate) values ('02/26/2009')

Insert into #checkDates (rptDate) values ('02/27/2009')

Insert into #checkDates (rptDate) values ('02/28/2009')

Insert into #checkDates (rptDate) values ('02/29/2009')

-- This fails below with invalid date

Select *

From #checkDates

where convert(datetime,rptDate) Between

'02/27/2009' and '02/28/2009'

-- put the isdate function as part of where clause

-- select statement still fails

Select *

From #checkDates

where isdate(rptDate) = 1 and

convert(datetime,rptDate) Between

'02/27/2009' and '02/28/2009'

-- switch to a derived table, the thought being to

-- remove the invalid dates from the inner select

-- somehow in my mind i think the inner query should be resolved first,

-- but this is not the case !

Select * from

( Select *

From #checkDates

where isdate(rptDate) = 1 ) x

where convert(datetime,rptDate) Between

'02/27/2009' and '02/28/2009'

-- wimpy solution is to select the "good" records into

-- a temp table and then perform the next select, yuck

-- BUT IT WORKS

Select * into #InterimResults

From #checkDates

where isdate(rptDate) = 1

Select * from #InterimResults

where convert(datetime,rptDate) Between

'02/27/2009' and '02/28/2009'

--

-- CTE SOLUTION, DOES NOT WORK EITHER

--

WITH goodDate_CTE AS (

Select *

From #checkDates

where isdate(rptDate) = 1

)

SELECT *

FROM goodDate_CTE

where convert(datetime,rptDate) Between

'02/27/2009' and '02/28/2009' |

| Mixing brackets with column list | 1/6/2009 3:33:54 PM |

I twisted my head around a select statement that wasn't returning what I wanted. Eventually I focused on the column list, which had column names with spaces and so required brackets []. In this case I mixed some columns with brackets and some without, ultimately leaving a comma off.

You'd expect the T-SQL not to compile, but it does, and it runs with no errors. Because the AS keyword is optional, T-SQL reads the bracketed name after the missing comma as a column alias for the column before it, so the additional field I wanted to include in the select list is silently dropped and the previous column is returned under its name.

grrrr...

create table #testTable

(tableid int identity(1,1) primary key,

[login name] varchar(20),

[is_password_validation] int,

[is_password_expiration] int,

[login type] varchar(20))

Insert into #testTable ([login name],[is_password_validation],

[is_password_expiration],[login type] ) values ('User 1',0,0,'AD')

Insert into #testTable ([login name],[is_password_validation],

[is_password_expiration],[login type] ) values ('User 2',0,0,'AD')

Insert into #testTable ([login name],[is_password_validation],

[is_password_expiration],[login type] ) values ('User 3',0,0,'AD')

Insert into #testTable ([login name],[is_password_validation],

[is_password_expiration],[login type] ) values ('User 4',1,1,'SQL')

Insert into #testTable ([login name],[is_password_validation],

[is_password_expiration],[login type] ) values ('User 5',0,1,'SQL')

select * from #testTable -- good

select [login name],

is_password_validation -- NOTICE THE MISSING COMMA

[login type]

From #testTable
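To see what the parser makes of the missing comma, the three queries below can be compared side by side. This is a cut-down sketch with a hypothetical temp table, #aliasDemo, so it runs on its own.

```sql
-- Hypothetical temp table, cut down from the example above
CREATE TABLE #aliasDemo
([login name] varchar(20),
 is_password_validation int,
 [login type] varchar(20));

INSERT INTO #aliasDemo ([login name], is_password_validation, [login type])
VALUES ('User 1', 0, 'AD');

-- Missing comma: two columns come back, and the second one is
-- is_password_validation under the heading "login type"
SELECT [login name],
       is_password_validation
       [login type]
FROM #aliasDemo;

-- The same query with the implied AS written out
SELECT [login name],
       is_password_validation AS [login type]
FROM #aliasDemo;

-- The intended query, with the comma: three columns
SELECT [login name],
       is_password_validation,
       [login type]
FROM #aliasDemo;

DROP TABLE #aliasDemo;
```

The same thing happens with unbracketed names, so the brackets only make the mistake harder to spot. Writing AS for every alias makes a stray alias stand out.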

|

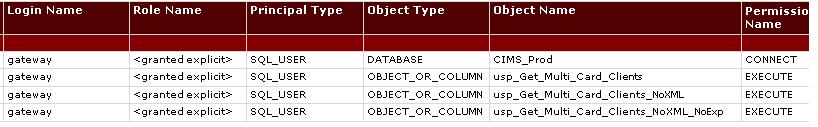

| Report of User Permissions | 12/31/2008 1:27:21 PM |

This is a custom report developed to list user permissions.

DBAs often need a consolidated list of permissions for a user, and this custom report provides it. Developed by Tom Reeves.

Here's a report you can use to see permissions for a given user or role in SQL Server 2005.

Save the attached file to the following location, which is where custom reports have to reside for them to work. Replace "{user}" with your username.

C:\Documents and Settings\{user}\My Documents\SQL Server Management Studio\Custom Reports

User_Permissions.txt - Right click and save with an RDL extension. (37.25 KB)

|

| SQL Server 2005 SP3 | 12/31/2008 11:07:16 AM |

Just a quick placeholder on the progress of SQL Server 2005 Service Pack 3.

This update is important, if nothing else to ensure you're on the latest patch, which addresses some security vulnerabilities.

There is also a cumulative update for Service Pack 3. Generally I don't go down the cumulative update path unless I've been advised to by Microsoft (i.e., it addresses a problem being experienced) or the SQL community in general highly recommends a specific CU.

I always start with patching my local developer edition, x86 Instance.
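A quick way to confirm where an instance stands before and after patching is to query SERVERPROPERTY. This is only a small sketch; the column aliases are my own.

```sql
-- Run against each instance before and after applying the service pack
SELECT SERVERPROPERTY('ProductVersion') AS product_version, -- 9.00.4035.00 for SQL Server 2005 SP3
       SERVERPROPERTY('ProductLevel')   AS product_level,   -- RTM, SP1, SP2, SP3 ...
       SERVERPROPERTY('Edition')        AS edition;
```

Saving the output for each box makes it easy to see which instances still need the patch.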

Slowly working through a few boxes, so far we've patched the following with no issues:

- 64 Bit named instances running under Polyserve 3.6.1 - dev, QA and production, no issues, Enterprise Edition.

- 64 Bit named instance stand-alone server, Enterprise Edition.

- 64 Bit named instance stand-alone server, Standard Edition.

- 64 Bit Clustered SQL Server, Active / Active Configuration, no issues, did require reboot of both nodes and fail over, Enterprise Edition.

- 32 Bit SSIS stand alone installations (no dbms) no issues.

- 32 Bit Reporting Services Web Farm, authentication issues afterwards.

I had an issue with the Reporting Services Web Farm, which uses SQL Authentication for connectivity: applying the service pack caused the login to be disabled. I had quite a difficult time getting it re-enabled and changing the password, but there were no errors in applying the service pack. |

| WMI Mountpoints and enlightenment | 1/29/2009 12:33:07 PM |

Mountpoints are fun and can easily solve issues with more than 26 drive letters (don't ask), but recently we ran into several issues with monitoring the mountpoints. In particular, we have a report that is based on the past growth history of a database, disk size and disk free space, and it estimates when a drive will be at 80% capacity and when it will fill up. With hundreds of database servers, this report can prioritize and proactively identify which server will encounter a problem next and when. Of course, the limitation is the word "drive".
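The arithmetic behind that kind of estimate can be sketched as follows. This is not the actual report query: the table variable, its column names and the sample numbers are all made up, and it assumes simple linear growth at an average daily rate taken from history.

```sql
-- Hypothetical sample data: one row per drive or mount point
DECLARE @disk TABLE
(volume_name varchar(100),
 capacity_gb decimal(10,2),
 free_gb decimal(10,2),
 growth_gb_per_day decimal(10,2)); -- assumed average taken from growth history

INSERT INTO @disk VALUES ('E:\', 500, 200, 2.5);
INSERT INTO @disk VALUES ('E:\mounts\data01\', 1000, 150, 5);

SELECT volume_name,
       -- days until used space reaches 80% of capacity (negative = already past 80%)
       CAST((0.80 * capacity_gb - (capacity_gb - free_gb))
            / NULLIF(growth_gb_per_day, 0) AS int) AS days_to_80_pct,
       -- days until the remaining free space is used up
       CAST(free_gb / NULLIF(growth_gb_per_day, 0) AS int) AS days_to_full
FROM @disk
ORDER BY days_to_full;
```

NULLIF avoids a divide-by-zero error for volumes that are not growing; those rows come back as NULL instead.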

Report Example:

When we began using mountpoints the report was not as accurate, and it needed to be adjusted. We have some internal services that collect the drive size and free space to a central DBA database. A review of this monitoring revealed it was using a WMI query, and a quick review of the WMI SDK showed another call that will pick up mountpoints: Select * from Win32_Volume. Life is good.

Not so quick. After hurdling from drives to drives and mountpoints, a problem was revealed where the WMI call failed on two servers. Enlightenment. These two SQL Servers had also been giving us odd issues with SQL Server Management Studio (SSMS), which is highly dependent on WMI, SMO, .NET and probably some other stuff. Fixing WMI on these two servers fixed the monitoring issue and corrected the SSMS issues.

Steps to fix WMI (Thanks to our Windows Team for the steps below):

1. net stop winmgmt

2. del %SystemRoot%\System32\WBEM\Repository /s /q

If that does not work, then I:

1. remove all rights from %SystemRoot%\System32\wbem\Repository\FS

2. disable the "Windows Management Instrumentation" service

3. reboot

4. add rights back to %SystemRoot%\System32\wbem\Repository\FS

5. delete the contents of %SystemRoot%\System32\wbem\Repository\FS

6. set the "Windows Management Instrumentation" service back to Automatic

7. start the "Windows Management Instrumentation" service

Here is a short Visual Basic Script (VBS) you can save to a text file with a .VBS extension to see the call to WMI to check disk space for mount points or drives. It filters out certain mount points for Polyserve, as we don't want to monitor those. Also, for some reason z:\ is mapped in our environment and this WMI script pulls that with nulls, so you need to test for those. You can also use PowerShell, but it is using a WMI call under the hood as well, and we have yet to install PowerShell on all our servers.

Set DiskSet = GetObject("winmgmts:{impersonationLevel=impersonate}!//BCCMAPP02") _

.ExecQuery("Select * from Win32_Volume")

For Each objItem In DiskSet

Ignore = False

if len(objitem.name) >= 51 then

If UCase(Left(objitem.name,51)) = _

UCase("C:\Program Files\PolyServe\MatrixServer\conf\mounts") Then

Ignore = True

End If

End If

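' Skip volumes that report a null capacity (for example the mapped z:\)

If Ignore = False And Not IsNull(objItem.Capacity) Then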

msgbox(objItem.Name & vbCrLf & "Percent Free = " & _

round((objItem.freespace/objItem.Capacity)*100,2) & _

" = " & objItem.freeSpace & " = " & objItem.Capacity)

End If

Next |
