| Missouri Pecans | 5/21/2025 7:39:33 AM |

| | 2025 Pecan census = 40 pecan trees.

Expecting about 20 to have nuts this year.

Peruque has been the first to bear, followed by Kanza, Pawnee and Colby.

Below is a Peruque pecan.

Pollination!

Comparison of a Missouri Peruque to a southern Georgia pecan (variety unknown).

|

| | rbio_await_response | 4/11/2025 5:34:48 AM |

| | Began seeing a new wait type, rbio_await_response, on Azure SQL in the Hyperscale tier.

It is not affecting performance, but it does show up as a wait with no SQL statement associated with it.

Microsoft's response is that this is the result of a recent patch and that they are researching it:

The logins come from user {machine}\\{windows fabric user} or NT AUTHORITY\SYSTEM, and they are related to some of our internal tasks, like backup or other background tasks. You may see the same logins in sp_who2 running system threads as well. There’s no negative performance impact on the db from this wait type or from the queries coming from the System user with the program name Fabric:/Worker.Vldb.Compute/facb87701e95. In fact, Fabric:/Worker.Vldb.Compute/facb87701e95 is basically the HS behind the covers.

We are working internally and exploring available options to correct this behavior.
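To keep an eye on the wait while Microsoft researches it, something like the following could be used. This is only a sketch, not what Microsoft or the monitoring tool runs. It assumes the query is run in the affected user database, that the wait is reported under a name starting with `RBIO` (the `LIKE` filter is my assumption), and that the background sessions are visible to your login.

```sql
-- Cumulative waits for this database since the last restart/failover.
-- Assumption: the wait type name starts with 'RBIO' (e.g. RBIO_AWAIT_RESPONSE).
SELECT wait_type,
       waiting_tasks_count,
       wait_time_ms,
       max_wait_time_ms,
       signal_wait_time_ms
FROM sys.dm_db_wait_stats
WHERE wait_type LIKE 'RBIO%'
ORDER BY wait_time_ms DESC;

-- Requests currently in that wait, with the login and program name
-- so they can be compared with what sp_who2 shows.
SELECT s.session_id,
       s.login_name,
       s.program_name,
       r.command,
       r.wait_type,
       r.wait_time AS wait_time_ms
FROM sys.dm_exec_requests AS r
JOIN sys.dm_exec_sessions AS s
    ON s.session_id = r.session_id
WHERE r.wait_type LIKE 'RBIO%';
```

If the second query returns nothing, that may just mean the system sessions are not visible with your permissions, not that the wait is gone.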

A copilot generated image, including odd spelling:

|

| | Stop using locking hints in Azure SQL | 1/17/2025 6:03:08 AM |

|

Old habits are hard to stop.

Teams begin using WITH (NOLOCK) or HOLDLOCK and they just keep using them.

Mostly a leftover, if you can believe it, from SQL Server 2000-era DBAs. Yep, that long.

Many developers don't understand locking, let alone the new enhancements to it in Azure SQL or SQL Server 2025.

There may be a reason to use a lock hint, but usually when I ask, there is no reason given other than "this is the way we have always done it."

Even before the new locking optimizations, teams were advocating to STOP with the locking hints.

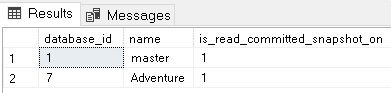

RCSI configuration is ON by default

SQL Server has many isolation levels to control the integrity of the data. However, in read-heavy applications, many developers have the terrible habit of using NOLOCK on all statements to avoid the contention of creating lock records on the server. The developers ignore the risk of incorrect results.

Over the years, Microsoft created a solution for SQL Server: two new isolation levels, RCSI (Read Committed Snapshot Isolation) and Snapshot Isolation, both also called optimistic isolation levels.

RCSI is considered a special isolation level. It’s different from the other isolation levels because RCSI is configured as a database property. There is no need to change the code. Every transaction that arrives on the server using Read Committed isolation (the default) is converted to "Snapshot Isolation". I’m using quotes here because it’s not exactly Snapshot Isolation. RCSI has some differences from Snapshot Isolation, and that’s why they are considered two different isolation levels.

The point is: Azure SQL uses RCSI by default. This is a big change for all existing applications. Any use of NOLOCK that you have in existing applications becomes useless and a problem for the application because it brings the usual issues of NOLOCK with no benefit, since the use of RCSI replaces all the possible benefits of NOLOCK.

You can check this default on Azure SQL by running the following query:

select database_id,name,is_read_committed_snapshot_on from sys.databases

Migrating applications to Azure SQL and using RCSI are great opportunities to eliminate all NOLOCKs still found in your SQL code.
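A rough way to start that cleanup is to confirm RCSI is on for the current database and then list the stored modules that still mention a locking hint. This is a sketch under a few assumptions: it only sees code stored in the database (procedures, views, functions, triggers), not ad hoc SQL in applications; it is a plain text match, so hints inside comments are reported too; and the column aliases are my own naming.

```sql
-- Is RCSI on for the database we are connected to?
SELECT name,
       is_read_committed_snapshot_on,
       snapshot_isolation_state_desc
FROM sys.databases
WHERE name = DB_NAME();

-- Stored modules whose definition still contains a locking hint.
-- UPPER() keeps the match working on case-sensitive collations.
SELECT OBJECT_SCHEMA_NAME(m.object_id) AS module_schema,
       OBJECT_NAME(m.object_id)        AS module_name,
       o.type_desc
FROM sys.sql_modules AS m
JOIN sys.objects AS o
    ON o.object_id = m.object_id
WHERE UPPER(m.definition) LIKE '%NOLOCK%'
   OR UPPER(m.definition) LIKE '%READUNCOMMITTED%'
   OR UPPER(m.definition) LIKE '%HOLDLOCK%'
ORDER BY module_schema, module_name;
```

Each hit still needs a human review; the list only tells you where to ask "why is this hint here?"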

|

| | CrowdStrike BSOD reboots corrupt Power BI config files | 7/24/2024 6:24:50 AM |

| | The recent CrowdStrike issue caused BSODs.

Teams began repeatedly rebooting servers to try to correct it.

Eventually the issue with CrowdStrike was resolved, but then the Power BI Report Server service would not start.

On review, web.config, office.config and several other .config files were corrupt; they were restored from another server / backup.

The service still had issues, so a repair of the service had to be run.

Fun times... |

| | AJ Graduation 2024 | 5/30/2024 1:14:57 PM |

| | AJ Graduation 2024

All 3 boys have completed the work...

|

| | No posts for 1 year | 5/30/2024 1:03:36 PM |

| | Goal accomplished, no posts for 1 year! |

| | Visual Studio IIS Debug web project | 5/29/2023 12:44:58 PM |

| | Sometimes the debugger won't launch.

Make sure Visual Studio was started as an administrator.

Sometimes permissions are lost. From an elevated command prompt, run:

C:\Windows\Microsoft.NET\Framework64\v4.0.30319\Aspnet_regiis.exe -ga domain\user

C:\Windows\Microsoft.NET\Framework64\v4.0.30319\Temporary ASP.NET Files

|

|

|